Hello.

I'm using the NRF52832's I2S peripheral connected to a TLV320DAC from TI. I've configured both the DAC and the I2S module, and I'm getting audio output (a simple sine wave read from a table), but there is an impulse glitch in the signal. The glitch appears to occur at the end of every I2S buffer transfer. With the I2S TX buffer set to 250 samples at a 41666 Hz sample rate, the glitch repeats every 250 / 41666 ≈ 0.006 seconds (6 ms). When I increase the I2S buffer size, the glitch period increases proportionally.
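The relationship between buffer size and glitch interval is just the duration of one buffer. A small sketch of that arithmetic (the function names are illustrative, not from any SDK): one buffer of N samples at fs samples/s lasts N / fs seconds, and a glitch at every buffer boundary recurs at fs / N Hz. If the glitch is strictly periodic, it would also appear in the spectrum as lines spaced roughly that far apart (about 166.7 Hz for 250 samples at 41666 Hz).

```c
#include <stdio.h>

/* Duration of one I2S buffer: N samples at fs samples per second. */
double buffer_period_s(unsigned samples_per_buffer, double sample_rate_hz)
{
    return (double)samples_per_buffer / sample_rate_hz;
}

/* Rate at which buffer boundaries (and any glitch tied to them) recur. */
double buffer_rate_hz(unsigned samples_per_buffer, double sample_rate_hz)
{
    return sample_rate_hz / (double)samples_per_buffer;
}

/* Print the expected glitch timing for a given buffer size and sample rate. */
void print_glitch_timing(unsigned n, double fs)
{
    printf("N=%u, fs=%.0f Hz -> period %.4f ms, rate %.2f Hz\n",
           n, fs, 1000.0 * buffer_period_s(n, fs), buffer_rate_hz(n, fs));
}
```

For example, `print_glitch_timing(250, 41666.0)` gives a period of about 6 ms; doubling N to 500 doubles the period to about 12 ms, which matches the observation that the glitch interval grows with the buffer size.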

I've checked my sine table interpolation algorithm, and it looks correct. I believe the problem lies with the NRF52832 rather than the DAC, since the I2S buffer size is the only variable that seems to affect the glitch. I also tried I2S slave mode, programming the DAC to drive the I2S clocks to the NRF chip, but that did not change the glitch.
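For comparison, here is a minimal sketch of a table-lookup sine generator with linear interpolation that keeps its phase across successive buffer fills. If the phase (or table index) were instead reset at the start of each fill, the waveform would jump once per buffer, which is one way a glitch could line up exactly with the buffer period. Everything here is an assumption for illustration: the struct and function names are hypothetical, the table size (256 entries plus one guard entry), the 32-bit phase accumulator and the mono `int16_t` output are arbitrary choices, and packing samples into the NRF52832's I2S word format is not shown.

```c
#include <stddef.h>
#include <stdint.h>

#define TABLE_BITS 8u
#define TABLE_LEN  (1u << TABLE_BITS)   /* 256 entries: an assumed table size */
#define FRAC_BITS  (32u - TABLE_BITS)   /* low phase bits used as the fraction */

/* Hypothetical oscillator state. One sine cycle spans the full 32-bit
 * phase range, so the accumulator wraps naturally modulo 2^32.
 * The table is built elsewhere, e.g. table[i] = round(32767 * sin(2*pi*i/256))
 * for i = 0..256, so the guard entry table[256] equals table[0]. */
typedef struct {
    const int16_t *table;  /* TABLE_LEN + 1 entries */
    uint32_t phase;        /* carried over between buffers, never reset */
    uint32_t phase_inc;    /* f_out / fs * 2^32 */
} sine_osc_t;

void sine_osc_set_freq(sine_osc_t *osc, double f_out, double fs)
{
    /* Valid for 0 <= f_out < fs / 2; rounds to the nearest increment. */
    osc->phase_inc = (uint32_t)(f_out / fs * 4294967296.0 + 0.5);
}

/* Fill n mono samples, interpolating linearly between adjacent table entries. */
void sine_osc_fill(sine_osc_t *osc, int16_t *buf, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        uint32_t idx  = osc->phase >> FRAC_BITS;               /* 0..255 */
        uint32_t frac = osc->phase & ((1u << FRAC_BITS) - 1u);
        int32_t a = osc->table[idx];
        int32_t b = osc->table[idx + 1];                       /* guard entry at 256 */
        int64_t step = ((int64_t)(b - a) * frac) / ((int64_t)1 << FRAC_BITS);
        buf[i] = (int16_t)(a + step);
        osc->phase += osc->phase_inc;                          /* continues into next buffer */
    }
}
```

Because the only state is the phase accumulator, filling 500 samples in one call or in two calls of 250 produces identical output, so buffer boundaries leave no trace in the waveform.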

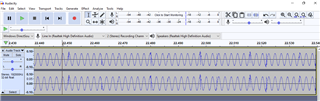

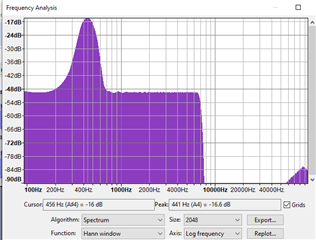

I recorded the audio output in Audacity; the images above show the waveform and frequency spectrum.
