Hi everyone,

We are using an nRF52832 with the S132 SoftDevice and nRF5_SDK_17.1.0_ddde560. Our application collects data from a sensor over I2C and sends it to a smartphone over BLE. The sensor has an internal FIFO buffer that takes about 80 ms to fill. We use a 60 ms app timer; on each timeout we send a TWI command to read how many samples are available in the FIFO, then burst-read that data from the sensor into the MCU. Until now we used blocking TWI calls to read from and write to the sensor, and that works flawlessly.
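To make the read sequence concrete, here is a minimal sketch of the two-step cycle (read the sample count, then burst-read) written as a state machine that a non-blocking driver could advance from its "transfer done" event. It is hardware-independent and is not our actual firmware: the names `fifo_reader_t`, `fifo_reader_on_timer` and `fifo_reader_on_twi_done`, the 2-byte sample size and the 32-sample FIFO depth are all assumptions for illustration, and the places where a real TWI transfer would be started are only marked with comments.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical states for a two-step, non-blocking FIFO read. */
typedef enum { RD_IDLE, RD_WAIT_COUNT, RD_WAIT_BURST } rd_state_t;

#define SAMPLE_SIZE 2u   /* assumed bytes per sample */
#define MAX_SAMPLES 32u  /* assumed FIFO depth in samples */

typedef struct {
    rd_state_t state;
    uint8_t    count;                         /* samples reported by the sensor */
    uint8_t    rx[MAX_SAMPLES * SAMPLE_SIZE]; /* destination of the burst read */
    bool       data_ready;                    /* true only after the burst completes */
} fifo_reader_t;

/* Called from the 60 ms timer. Starts a cycle only if the previous one
 * has finished; returns false if a transfer is still in flight. */
bool fifo_reader_on_timer(fifo_reader_t *r)
{
    if (r->state != RD_IDLE) {
        return false;
    }
    r->data_ready = false;
    r->state = RD_WAIT_COUNT; /* real code would start the count read here */
    return true;
}

/* Called from the TWI "transfer done" event with the bytes just received. */
void fifo_reader_on_twi_done(fifo_reader_t *r, const uint8_t *data, size_t len)
{
    switch (r->state) {
    case RD_WAIT_COUNT:
        r->count = (len > 0 && data[0] <= MAX_SAMPLES) ? data[0] : 0;
        if (r->count == 0) {
            r->state = RD_IDLE;   /* nothing to read this cycle */
        } else {
            r->state = RD_WAIT_BURST; /* real code would start the burst read here */
        }
        break;
    case RD_WAIT_BURST:
        if (len > sizeof r->rx) {
            len = sizeof r->rx;
        }
        memcpy(r->rx, data, len);
        r->data_ready = true;     /* only now is rx safe to consume */
        r->state = RD_IDLE;
        break;
    default:
        break;                    /* unexpected event while idle: ignore */
    }
}
```

The point of the sketch is the ordering: with a blocking call the data is valid as soon as the function returns, whereas here `rx` is only valid once `data_ready` is set by the second completion event.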

Since our app prioritizes low power consumption, we want to switch to non-blocking TWI. We have implemented it successfully: it reads data from the sensor, and we see a significant reduction in power consumption compared with the blocking version. However, a very strange issue has appeared since the change. After a power reset, the first dataset collection goes perfectly. Our computer app visualizes the data received over BLE, and the waveform looks exactly as expected. However, if we terminate this dataset and start a new one (which sends the commands to stop and restart the sensor), the first few seconds of the new dataset show strange data on the graph. From our experience, this looks like a single data point being sent multiple times: we expect a sinusoidal waveform but see a staircase signal instead. The issue lasts only about 3-4 seconds, then goes away, and the rest of the dataset runs normally. If we terminate this dataset and start another one, the issue appears again for the first 3-4 seconds. Subsequent datasets may or may not show the issue, but the chance of hitting it on any dataset after the first is high (about 90%). Only the first dataset after a power reset never has the issue.

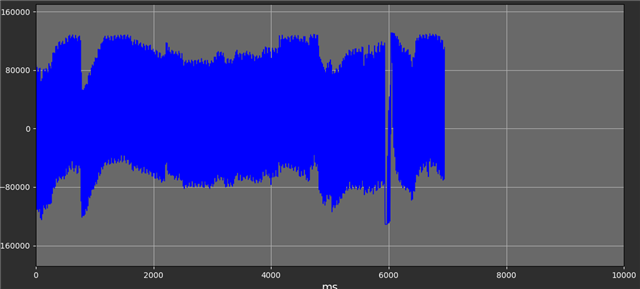

Below is how the normal signal should look:

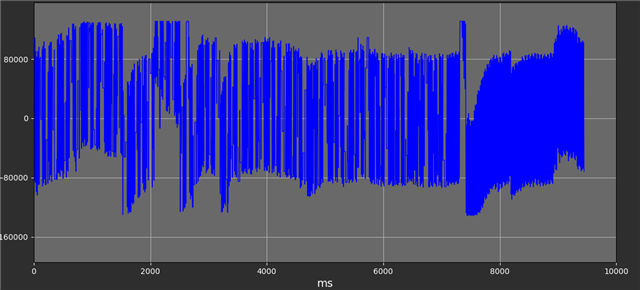

Below is how the signal looks when the issue happens. The x-axis is in ms, so each BLE packet covers a very small part of the graph (60 ms). The tiny ripples before x = 7000 are made up of small horizontal lines, each caused by a single data point filling a whole BLE packet, instead of multiple distinct data points from the sensor being sent to the computer to produce a smooth graph.
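The "single data point fills a whole packet" symptom can also be checked on the firmware side, which might help narrow down where the repetition enters (sensor read, local buffer, or BLE packaging). The following is only a sketch: the function name `packet_is_flat` and the `int16_t` sample type are assumptions and are not part of the firmware described here.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Returns true when every sample in the packet equals the first one,
 * i.e. the packet would draw as one horizontal line on the graph.
 * Packets with fewer than two samples are never reported as flat. */
bool packet_is_flat(const int16_t *samples, size_t n)
{
    if (n < 2) {
        return false;
    }
    for (size_t i = 1; i < n; i++) {
        if (samples[i] != samples[0]) {
            return false;
        }
    }
    return true;
}
```

Running such a check on the data right after the TWI transfer completes, and again just before the packet is queued for BLE, would show at which stage the samples become identical.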

We have run many tests and confirmed that the issue is not caused by another I2C slave. A 1000-byte local buffer on the MCU sits between the sensor buffer and the TX buffer of the BLE packet; it packages the sensor data into the format we want before it is sent to the smartphone in a BLE packet. This buffer is also cleared at the beginning of each dataset, so it should not be the cause. We also made sure the BLE signal strength was good during the tests. With the same hardware and nearly identical firmware (non-blocking TWI vs. blocking TWI), we cannot understand why this issue occurs or how to solve it; we have checked every culprit we could think of, including BLE and the timer. The TWI interrupt priority is APP_IRQ_PRIORITY_LOW_MID. The BLE parameters provide more than enough bandwidth to handle the BLE packets at the sensor data rate.
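For readers who want a picture of the local buffer, here is a minimal sketch of a 1000-byte staging buffer with an explicit reset at the start of a dataset. It is an illustration under assumptions, not our actual implementation: the names `staging_t`, `staging_reset`, `staging_push` and `staging_pop` are hypothetical, and a simple linear layout is used instead of whatever layout the real firmware has.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define STAGING_SIZE 1000u /* local buffer size mentioned above */

typedef struct {
    uint8_t data[STAGING_SIZE];
    size_t  len; /* number of bytes currently staged */
} staging_t;

/* Clears both the contents and the fill level at the start of a dataset. */
void staging_reset(staging_t *s)
{
    memset(s->data, 0, sizeof s->data);
    s->len = 0;
}

/* Appends up to n bytes; returns how many were actually stored. */
size_t staging_push(staging_t *s, const uint8_t *src, size_t n)
{
    size_t room = STAGING_SIZE - s->len;
    if (n > room) {
        n = room;
    }
    memcpy(&s->data[s->len], src, n);
    s->len += n;
    return n;
}

/* Removes up to n bytes from the front; returns how many were copied out. */
size_t staging_pop(staging_t *s, uint8_t *dst, size_t n)
{
    if (n > s->len) {
        n = s->len;
    }
    memcpy(dst, s->data, n);
    memmove(s->data, &s->data[n], s->len - n); /* shift the remainder forward */
    s->len -= n;
    return n;
}
```

In a design like this, a reset has to clear the fill level as well as the bytes; a reset that only zeroes the data while an in-flight non-blocking transfer later writes into the buffer would be one place where stale or repeated data could appear.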