We are experiencing critical data integrity issues on the BLE link of our gateway device. The problem occurs specifically when the device is operating as a BLE-to-Wi-Fi bridge and is under heavy load.

The scenario is a large file transfer (approx. 800KB) from a BLE node. When our gateway receives this data via GATT notifications (using an application-level SAR protocol), it simultaneously uploads it to a remote server via a Wi-Fi TCP connection. When both data streams (BLE-in and Wi-Fi-out) are active, the BLE link becomes unstable, logging numerous errors.

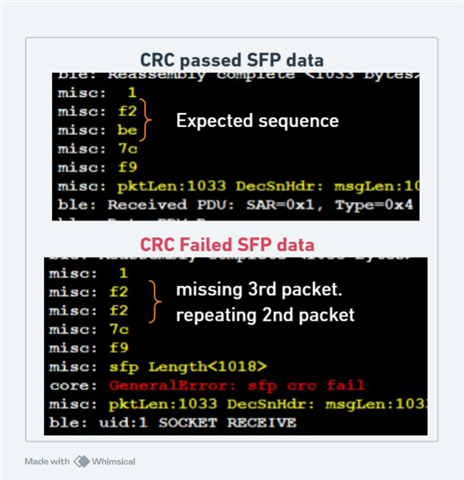

Upon debugging, we noticed that the GATT notification segments are in out of order at this point. For example, if we are expecting packets in sequence of (A1, A2, A3, A4), during error the sequence will be (A1, A2, A2, A4). one of the packet will repeat and seems to be replacing next packet.

Observed Behavior & Key Correlations

We have isolated three distinct sets of logs that, when correlated by their timestamps, show a clear pattern of failure.

1. Gateway Application Log :During the transfer, our gateway's application log records a high number of critical errors related to our SAR (Segmentation and Reassembly Protocol). The failures appear to start with packet corruption, which then breaks the segmentation logic:

sfp crc fail: This error occurs multiple times, indicating the gateway is receiving corrupted BLE packets.Reassembly buffer overflow/out of order seg skipped: These logs indicate our SAR protocol is failing. We believe this is because the preceding packet corruption (sfp crc fail) creates a "hole" in the expected packet sequence. When the next valid packet arrives, the SAR logic sees it as "out of order," which breaks the reassembly process.

2. Node Log :Logs from the sending BLE node correlate directly with our gateway's internal errors.

SFP Nack Retry: This is logged on the Node almost immediately after our gateway logs asfp crc fail.SFP Timeout Retry: This occurs after the gateway's SAR protocol fails (e.g., after theout of order seg skippedlog), suggesting our gateway received Continution Segment instead of Last segment.

3. Gateway Wi-Fi/TCP Log (Suspected Correlated Event):Logs from the gateway's internal TCP stack show that at the exact same time as the BLE CRC failures and SAR errors, the Wi-Fi radio is under maximum load.

tcp_window_full: The Wi-Fi send buffer is full, indicating a high-throughput transfer.ca dup_ack: The TCP stack is busy handling packet loss and retransmissions on the Wi-Fi/Internet side.

- RTT logs.txt

06 2016/01/01 02:06:58.6 misc: RESET: 2016/01/01 02:06:58 BT Provisioned success 007 2016/01/01 02:06:58.6 bt: off 008 2016/01/01 02:06:58.6 mqtt: disconnected 009 2016/01/01 02:06:58.7 bt: scan deferred 010 2016/01/01 02:07:00.1 bt: scan 011 2016/01/01 02:07:03.1 bo: Boot call 012 2016/01/01 02:07:03.1 mqtt: bt_start 013 2016/01/01 02:07:03.1 bt: adv deferred 014 2016/01/01 02:07:10.1 bt: off 015 2016/01/01 02:07:11.1 bt: advertise 016 2016/01/01 02:07:11.1 bt: Proxy enabled already 017 2016/01/01 02:07:11.1 bt2: Start proxy 018 2016/01/01 02:07:11.1 bt2: Enable Mesh 019 2016/01/01 02:07:11.1 bt2: Adv Tx Power 8 020 2016/01/01 02:07:11.1 bt: adv iBeacon BB 59E 021 2016/01/01 02:07:11.1 bt2: Adv repeat 314C 022 2016/01/01 02:07:11.1 mqtt: bt_connect 023 2016/01/01 02:07:11.6 bt: GAP conn handle 0 024 2016/01/01 02:07:11.6 bt2: set_connect_flag 1 025 2016/01/01 02:07:11.6 bt: GAP RSSI -66 026 2016/01/01 02:07:11.6 bt2: set_connect_flag 3 027 2016/01/01 02:07:12.3 bt: bspReadySet 1 028 2016/01/01 02:07:12.3 bt2: set_connect_flag 7 029 2016/01/01 02:07:12.3 mqtt: Connect 030 2016/01/01 02:07:12.3 bt2: Tx L21 15,04,04,01,00,3C,35,39,35,33 031 2016/01/01 02:07:12.3 bt2: Mesh disable supressed 032 2016/01/01 02:07:12.3 bt: connected 033 2016/01/01 02:07:13.1 bt: node id deferred 034 2016/01/01 02:07:25.7 bt2: Rx L5 05,02,35,C7,2C 035 2016/01/01 02:07:25.7 mqtt: GWINFO C72C 35 036 2016/01/01 02:07:31.9 bt2: Rx L3 03,05,00 037 2016/01/01 02:07:31.9 mqtt: CONNACK 038 2016/01/01 02:07:31.9 mqtt: Register /wear/v2/data/595315500014389 039 2016/01/01 02:07:31.9 bt2: Tx L35 23,0A,00,00,00,01,2F,77,65,61 040 2016/01/01 02:07:32.1 bt2: Rx L7 07,0B,00,01,00,01,00 041 2016/01/01 02:07:32.1 mqtt: REGACK 1 042 2016/01/01 02:07:32.1 mqtt: Register /wear/v2/cs/595315500014389 043 2016/01/01 02:07:32.1 bt2: Tx L33 21,0A,00,00,00,02,2F,77,65,61 044 2016/01/01 02:07:32.2 bt2: Rx L7 07,0B,00,02,00,02,00 045 2016/01/01 02:07:32.2 mqtt: REGACK 2 046 2016/01/01 02:07:32.2 mqtt: Register /wear/v1/time/595315500014389 047 2016/01/01 02:07:32.2 bt2: Tx L35 23,0A,00,00,00,03,2F,77,65,61 048 2016/01/01 02:07:32.2 bt2: Rx L7 07,0B,00,03,00,03,00 049 2016/01/01 02:07:32.2 mqtt: REGACK 3 050 2016/01/01 02:07:32.2 mqtt: Register /wear/v2/fwu/595315500014389 051 2016/01/01 02:07:32.2 bt2: Tx L34 22,0A,00,00,00,04,2F,77,65,61 052 2016/01/01 02:07:32.3 bt2: Rx L7 07,0B,00,04,00,04,00 053 2016/01/01 02:07:32.3 mqtt: REGACK 4 054 2016/01/01 02:07:32.3 mqtt: Register /wear/v1/lwt/595315500014389 055 2016/01/01 02:07:32.3 bt2: Tx L34 22,0A,00,00,00,05,2F,77,65,61 056 2016/01/01 02:07:32.3 bt2: Rx L7 07,0B,00,05,00,05,00 057 2016/01/01 02:07:32.3 mqtt: REGACK 5 058 2016/01/01 02:07:32.3 mqtt: Register /wear/v1/stat/595315500014389 059 2016/01/01 02:07:32.3 bt2: Tx L35 23,0A,00,00,00,06,2F,77,65,61 060 2016/01/01 02:07:32.4 bt2: Rx L7 07,0B,00,06,00,06,00 061 2016/01/01 02:07:32.4 mqtt: REGACK 6 062 2016/01/01 02:07:32.4 mqtt: Register /wear/v1/sfp/595315500014389 063 2016/01/01 02:07:32.4 bt2: Tx L34 22,0A,00,00,00,07,2F,77,65,61 064 2016/01/01 02:07:32.5 bt2: Rx L7 07,0B,00,07,00,07,00 065 2016/01/01 02:07:32.5 mqtt: REGACK 7 066 2016/01/01 02:07:32.5 mqtt: Subscribe Q0 /mqcp/ppm/v2/data/595315500014389 067 2016/01/01 02:07:32.5 bt2: Tx L38 26,12,00,00,08,2F,6D,71,63,70 068 2016/01/01 02:07:32.9 bt2: Rx L8 08,13,00,00,08,00,08,00 069 2016/01/01 02:07:32.9 mqtt: SUBACK 8 070 2016/01/01 02:07:32.9 mqtt: Subscribe Q1 /mqcp/ppm/v2/cs/595315500014389 071 2016/01/01 02:07:32.9 bt2: Tx L36 24,12,20,00,09,2F,6D,71,63,70 072 2016/01/01 02:07:33.3 bt2: Rx L8 08,13,20,00,09,00,09,00 073 2016/01/01 02:07:33.3 mqtt: SUBACK 9 074 2016/01/01 02:07:33.3 mqtt: Subscribe Q0 /mqcp/ppm/v1/time/595315500014389 075 2016/01/01 02:07:33.3 bt2: Tx L38 26,12,00,00,0A,2F,6D,71,63,70 076 2016/01/01 02:07:33.7 bt2: Rx L8 08,13,00,00,0A,00,0A,00 077 2016/01/01 02:07:33.7 mqtt: SUBACK 10 078 2016/01/01 02:07:33.7 mqtt: Subscribe Q1 /mqcp/ppm/v2/fwu/595315500014389 079 2016/01/01 02:07:33.7 bt2: Tx L37 25,12,20,00,0B,2F,6D,71,63,70 080 2016/01/01 02:07:34.1 bt2: Rx L8 08,13,20,00,0B,00,0B,00 081 2016/01/01 02:07:34.1 mqtt: SUBACK 11 082 2016/01/01 02:07:34.1 mqtt: Subscribe Q0 /mqcp/ppm/v1/sfp/595315500014389 083 2016/01/01 02:07:34.1 bt2: Tx L37 25,12,00,00,0C,2F,6D,71,63,70 084 2016/01/01 02:07:34.5 bt2: Rx L8 08,13,00,00,0C,00,0C,00 085 2016/01/01 02:07:34.5 mqtt: SUBACK 12 086 2016/01/01 02:07:34.5 mqtt: ready 087 2016/01/01 02:07:34.5 bo: Connected, rssi -66, mtu 236, gw addr 0xC72C 088 2016/01/01 02:07:34.5 bo: Send Status 089 2016/01/01 02:07:34.5 mqtt: Publish_send L24 Q1 T6 090 2016/01/01 02:07:34.5 mqtt: Publish 01,56,85,DF,66,62,02,00,00,00 091 2016/01/01 02:07:34.5 bt2: Tx L31 1F,0C,20,00,06,00,0D,01,56,85 092 2016/01/01 02:07:34.5 mqtt: publishing 093 2016/01/01 02:07:34.9 bt2: Rx L7 07,0D,00,06,00,0D,00 094 2016/01/01 02:07:34.9 mqtt: PUBACK 095 2016/01/01 02:07:34.9 mqtt: ready 096 2016/01/01 02:07:34.9 bo: Uploading 3584 Events bytes 097 2016/01/01 02:07:34.9 mqtt: Publish_send L68 Q0 T1 098 2016/01/01 02:07:34.9 mqtt: Publish 00,36,06,79,27,B3,00,00,56,85 099 2016/01/01 02:07:34.9 bt2: Tx L75 4B,0C,00,00,01,00,0E,00,36,06 100 2016/01/01 02:07:34.9 mqtt: ready 101 2016/01/01 02:07:39.3 bt: bspReadySet 0 102 2016/01/01 02:07:39.3 bt2: Stop proxy 103 2016/01/01 02:07:39.3 bt2: Disable Mesh 104 2016/01/01 02:07:39.3 bt: off 105 2016/01/01 02:07:39.3 mqtt: disconnected 106 2016/01/01 02:07:39.3 core: GeneralError: 1974 Disconnect during Events 107 2016/01/01 02:07:39.3 bo: call completed with failures 108 2016/01/01 02:07:41.1 bt: node_id_advertise 109 2016/01/01 02:07:41.1 bt2: Enable Proxy 110 2016/01/01 02:07:41.1 bt2: Start proxy 111 2016/01/01 02:07:41.1 bt2: Enable Mesh 112 2016/01/01 02:07:41.1 bt2: Adv Tx Power 8 113 2016/01/01 02:07:41.1 bt: adv iBeacon FE 7500059E 114 2016/01/01 02:07:41.1 bt2: Adv repeat 314C 115 2016/01/01 02:08:08.1 bt2: Stop proxy 116 2016/01/01 02:08:08.1 bt2: Disable Mesh

087 2025/11/03 05:47:16.44 misc: 1 088 2025/11/03 05:47:16.44 misc: 85 089 2025/11/03 05:47:16.44 misc: 85 090 2025/11/03 05:47:16.44 misc: 3b 091 2025/11/03 05:47:16.44 misc: 5e 092 2025/11/03 05:47:16.44 misc: sfp Length<1001> 093 2025/11/03 05:47:16.44 core: GeneralError: sfp crc fail 094 2025/11/03 05:47:38.00 misc: 1 095 2025/11/03 05:47:38.00 misc: e6 096 2025/11/03 05:47:38.00 misc: e7 097 2025/11/03 05:47:38.01 misc: 1f 098 2025/11/03 05:47:38.01 misc: sfp Length<1015> 099 2025/11/03 05:47:38.01 core: GeneralError: sfp crc fail 100 2025/11/03 05:47:38.01 core: GeneralError: uid:1 MQTTSN MLen 1030 785 101 2025/11/03 05:48:28.66 misc: 1 102 2025/11/03 05:48:28.66 misc: 74 103 2025/11/03 05:48:28.66 misc: 74 104 2025/11/03 05:48:28.66 misc: 74 105 2025/11/03 05:48:28.66 misc: f9 106 2025/11/03 05:48:28.66 misc: sfp Length<1007> 107 2025/11/03 05:48:28.66 core: GeneralError: sfp crc fail 108 2025/11/03 05:48:37.98 misc: Unsubscribed handle <0x0012> 109 2025/11/03 05:48:37.98 misc: BLE Disconnected <22>