Hi,

Our device has a current budget of around 1 mA. We have examined several ways to constrain the actual connection parameters to keep current consumption low, but there will always be central devices that do not behave the "expected" way.

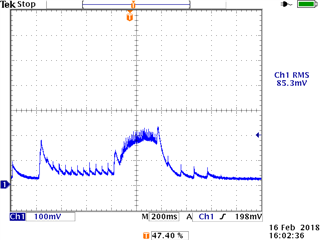

So the idea now is to ride out current peaks by skipping connection events using slave latency.

Unfortunately, the communication is bidirectional, so the mouse example does not really fit.

I'm now wondering whether the following setup is legal, because the documentation is not very clear about this and tests suggest that it might work:

- establish the connection with e.g. connection interval = 7.5 ms and latency = 0

- if current/capacitors are ok, allow communication

- if there is a current peak detected, delay communication

"Allow communication" is done with "sd_ble_opt_set( BLE_GAP_OPT_LOCAL_CONN_LATENCY, latency=0 )", and "delay communication" is done with "sd_ble_opt_set( BLE_GAP_OPT_LOCAL_CONN_LATENCY, latency=3 )".

Comments / suggestions are highly welcome!

Hardy