As edge AI continues to reshape the IoT landscape, Nordic Semiconductor is meeting the moment with the nRF54LM20B – a new ultra-low power wireless SoC in the nRF54L Series that integrates an Axon NPU (Neural Processing Unit) alongside the generous memory, up to 66 GPIOs, and high-speed USB you'd expect from its sibling, the nRF54LM20A.

In this blog post, we will explore what the Axon NPU brings to the table, how the nRF54LM20B fits into the broader nRF54L Series, and the different options for development on this new device, from automatic wake-word model generation in the Nordic Edge AI Lab to nRF Connect SDK support with the Edge AI Add-on.

Expanding AI capabilities

The nRF54LM20B is the first part from Nordic Semiconductor to integrate a dedicated processing core for running neural networks faster and more efficiently, so-called “AI accelerator hardware”. The Axon NPU is developed in-house by Nordic, leveraging our unique, proprietary architecture to achieve both ultra-low power consumption and high processing performance.

The introduction of the Axon NPU enables new use cases for the nRF54L Series: audio classification, including keyword spotting (KWS) and wake-word detection, as well as accelerated execution of more advanced LiteRT models that would otherwise suffer from excessive latency and inefficient execution on the CPU. This complements the previously launched Neuton models, which are ultra-efficient, CPU-run edge AI models suited to simpler edge AI applications, such as interpreting low-rate sensor data from accelerometers or PPG sensors.

Across the wireless portfolio

In addition to the nRF54LM20B, the Axon NPU is also being integrated into the nRF92 Series of cellular-IoT modules and into future SoCs supporting other wireless technologies.

New tools

To support the development of edge AI applications, new tools for both local and cloud-based workflows are needed.

Edge AI Add-on v2.0

Version 2.0 of the Edge AI Add-on for nRF Connect SDK introduces low-level Axon NPU drivers and expands the nRF Edge AI library (nrf_edgeai_lib) with Axon support. Additionally, it provides compatible code samples alongside the Axon NPU Compiler, enabling conversion of LiteRT models for execution on the Axon NPU. This follows the December v1.0 release, which added support for custom Neuton models within the nRF Connect SDK.

Axon compiler

The Axon compiler is a tool for converting AI models in .tflite or .h5 format into an Axon NPU-compatible model, represented by a header file (.h) and a separate header file containing test vectors. The compiler also reports the model’s memory footprint, inference time, accuracy, confusion matrix, and quantization loss. The tool is available as a ready-to-use Docker image, or it can be built manually from source.

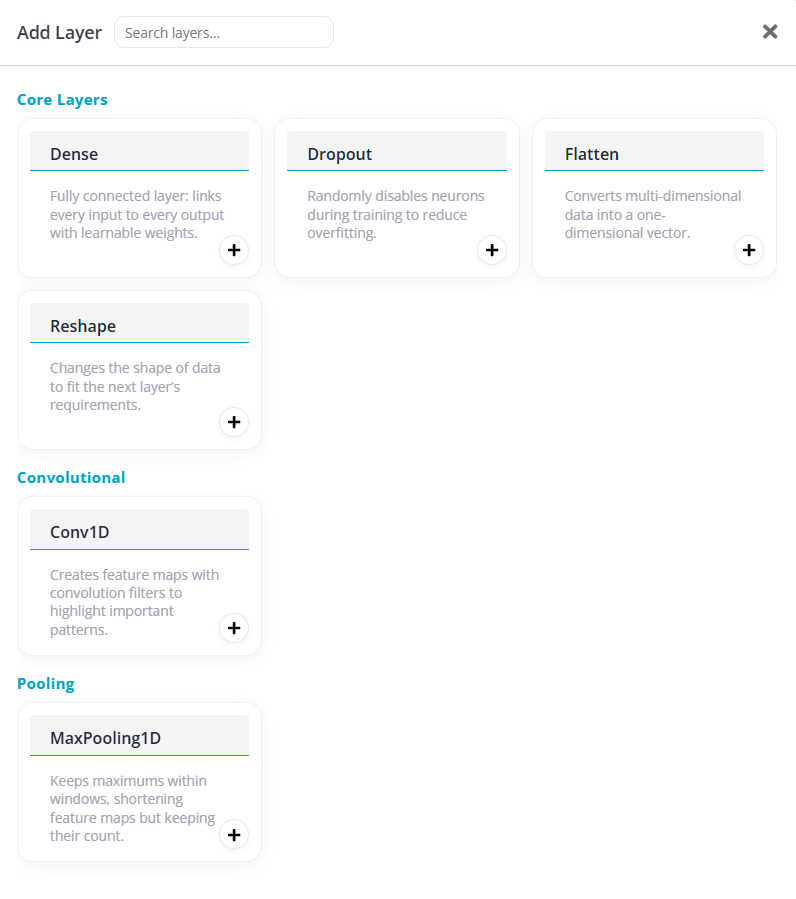

The Axon NPU natively accelerates 1D and 2D convolutions, depthwise convolutions, fully connected layers, pooling, and element-wise arithmetic – all operating on 8-bit quantized data. Operators that don’t have hardware acceleration are efficiently mapped to the Axon NPU by the compiler. Native activation functions such as ReLU and LeakyReLU execute entirely on the NPU, while additional activations like Sigmoid and Tanh are handled transparently via CPU fallback. The architecture also supports stateful inference across invocations, tensor manipulation operations like strided slice and concatenation, and softmax, giving developers the flexibility to deploy a wide variety of model topologies efficiently on-device.
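To make "8-bit quantized data" concrete, here is a minimal sketch of the affine int8 quantization scheme commonly used for LiteRT models, where a real value is approximated as scale * (q - zero_point). The function names are hypothetical and chosen for illustration. Whether the Axon compiler uses exactly these parameters internally is an assumption; the quantization loss it reports is the authoritative figure.

```python
def choose_params(min_val, max_val):
    """Pick scale and zero point so [min_val, max_val] spans the int8 range.

    Illustrative helper (not a Nordic or LiteRT API).
    """
    # The representable range must contain 0.0 so that zero maps exactly.
    min_val, max_val = min(min_val, 0.0), max(max_val, 0.0)
    scale = (max_val - min_val) / 255.0
    if scale == 0.0:
        scale = 1.0  # degenerate all-zero tensor; any scale works
    zero_point = round(-128 - min_val / scale)
    return scale, zero_point


def quantize_int8(values, scale, zero_point):
    """Map floats to int8: q = round(x / scale) + zero_point, saturated."""
    out = []
    for x in values:
        q = round(x / scale) + zero_point
        out.append(max(-128, min(127, q)))  # clamp to the int8 range
    return out


def dequantize_int8(q_values, scale, zero_point):
    """Recover approximate floats: x is roughly scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in q_values]


if __name__ == "__main__":
    scale, zero_point = choose_params(-1.0, 3.0)
    q = quantize_int8([-1.0, 0.0, 3.0], scale, zero_point)
    print(q, dequantize_int8(q, scale, zero_point))
```

The round trip through `dequantize_int8` shows where quantization loss comes from: each value is recovered only to within about one quantization step.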

Edge AI Lab

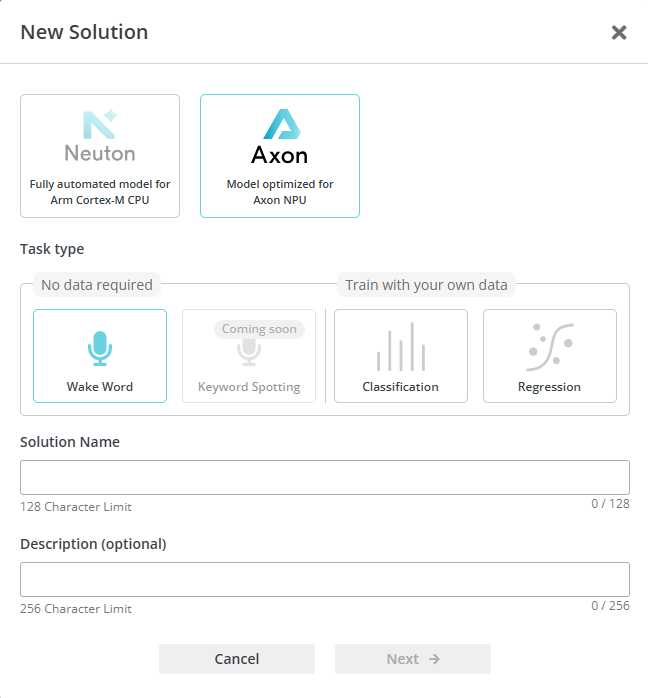

The Nordic Edge AI Lab adds two new features to support the Axon NPU: training classification or regression models on your own custom dataset, and an automatic, no-data wake-word pipeline dubbed “Text-to-wake-word”.

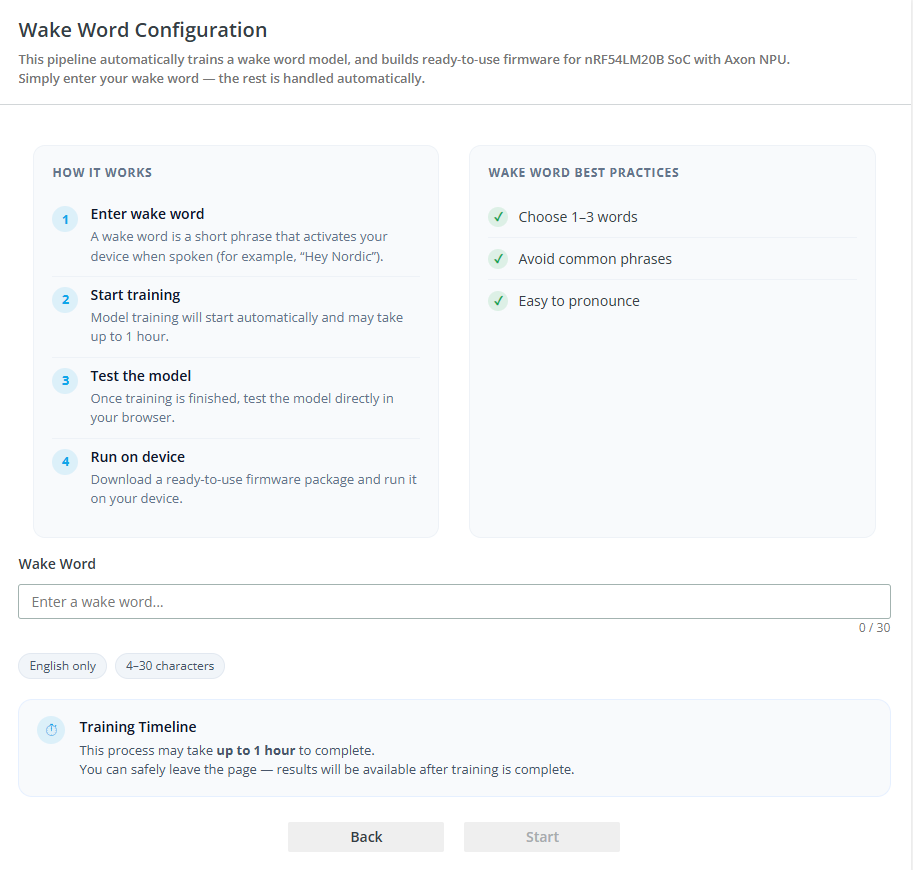

Text-to-wake-word

This feature generates wake-word models from a single text input, such as “Okay Nordic”, removing the need to collect and label thousands of spoken samples. The Edge AI Lab automatically creates a classifier that recognizes the wake word in audio, dramatically reducing data-collection time and enabling teams to evaluate voice triggers early. The resulting model can be built into nRF Connect SDK applications using the Edge AI Add-on and executed on any Axon NPU-enabled Nordic SoC, such as the nRF54LM20B.

Model builder for the Axon NPU

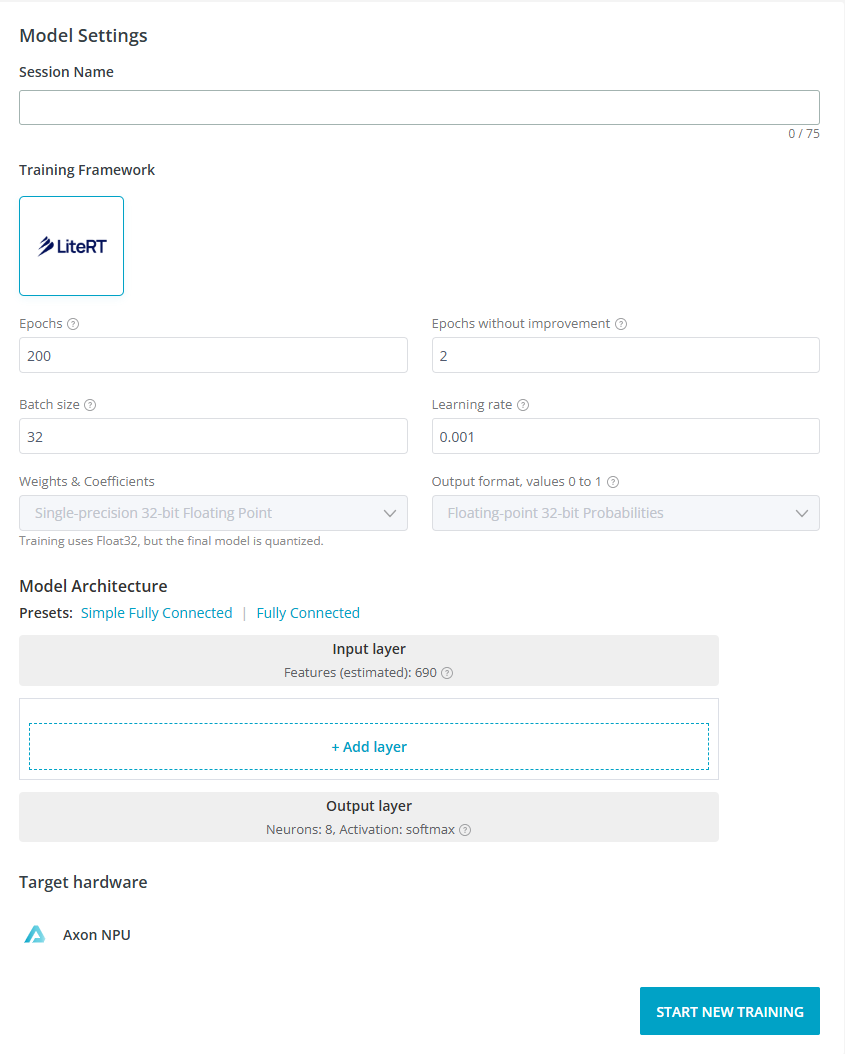

The Nordic Edge AI Lab now supports building LiteRT models for the Axon NPU. You upload your own dataset, specify the model architecture, generate a classification or regression model, and then deploy it on an Axon NPU-enabled SoC. This complements the automated Neuton (CPU-run) flow by providing models that run on the Axon NPU. On the nRF54LM20B, the integrated Axon NPU executes these TensorFlow Lite–class models with up to 15x faster inference than the CPU, making it well suited to high-rate sensor streams, audio, or imaging, where throughput and efficiency matter.

The workflow is similar to the one offered for custom Neuton models: the user uploads a custom dataset and creates a model tailored to their application. The key difference is that, after uploading the dataset, users must also specify an architecture before a training session can be completed.

Defining a neural network's architecture mainly means specifying the number of layers and the content of each layer. Two presets are available. “Simple Fully Connected” consists of three dense layers with 64, 32 and 16 neurons, respectively. “Fully Connected” consists of three dense layers with 256, 128 and 64 neurons, each followed by a dropout layer with a rate of 0.2. Advanced users can also specify their own custom architecture, built from layer blocks and operations that are part of the Axon NPU’s feature set.
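To get a feel for how the two presets differ in size, the sketch below counts the trainable parameters (weights plus biases) in each stack of dense layers. The input length of 40 features and the 4-class output layer are hypothetical values chosen for illustration, and appending an output layer after the three preset layers is an assumption; the Edge AI Lab derives the real values from your dataset.

```python
def dense_param_count(input_size, layer_sizes, output_size):
    """Count weights and biases in a stack of fully connected layers."""
    total = 0
    fan_in = input_size
    for units in list(layer_sizes) + [output_size]:
        total += fan_in * units + units  # weight matrix plus one bias per unit
        fan_in = units
    return total


# Layer widths taken from the preset descriptions above.
SIMPLE_FULLY_CONNECTED = [64, 32, 16]
FULLY_CONNECTED = [256, 128, 64]  # dropout layers add no parameters

INPUT_SIZE = 40   # hypothetical feature-vector length
OUTPUT_SIZE = 4   # hypothetical number of classes

if __name__ == "__main__":
    for name, layers in [("Simple Fully Connected", SIMPLE_FULLY_CONNECTED),
                         ("Fully Connected", FULLY_CONNECTED)]:
        count = dense_param_count(INPUT_SIZE, layers, OUTPUT_SIZE)
        print(f"{name}: {count} parameters")
```

Under these assumptions the larger preset has roughly ten times as many parameters as the smaller one. With 8-bit weights the parameter count gives a rough first estimate of model size in bytes, but the footprint reported by the tooling is the figure to rely on.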

After training has completed, the model is downloaded as a header file (.h) representing the model, compiled for inference on the Axon NPU. Running these models on the CPU is not possible.

Edge Impulse support

The third-party edge AI model training platform, Edge Impulse, has also gained full Axon NPU support. Users of the platform will be able to train models in the same way they are used to, and trained models can be exported either as a library compiled for the Axon NPU architecture or as a ready‑to‑program firmware image for the nRF54LM20 DK. In other words, using the Axon compiler is not necessary to run models from Edge Impulse on the NPU.

Developing with the nRF54LM20B

Once an AI model has been generated (and converted with the Axon compiler, if it is a standard LiteRT model from a local TensorFlow toolchain), it is time to build the model into an SDK application. The model instance is declared in a generated header file, where all file and symbol names incorporate the model name, allowing multiple models to coexist cleanly within a single project. From there, inference is performed by invoking the appropriate API. Models generated by the Nordic Edge AI Lab use the abstracted nrf_edgeai_lib API, whereas models converted with the Axon NPU compiler use the low-level Axon NPU driver API directly. Once initiated, model inference executes to completion without requiring any further user involvement. Developers can choose between synchronous operation, where the call blocks until inference is complete, and asynchronous operation, where a callback function is triggered upon completion, freeing resources to perform other work in the interim.
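The difference between the two calling styles can be illustrated with a small host-side sketch. This is not the nrf_edgeai_lib or Axon driver API: `run_inference`, `infer_sync` and `infer_async` are hypothetical names, and a Python thread pool stands in for the NPU purely to show the control flow of blocking versus callback-based completion.

```python
from concurrent.futures import ThreadPoolExecutor


def run_inference(samples):
    """Hypothetical stand-in for a model: index of the largest input value."""
    return max(range(len(samples)), key=lambda i: samples[i])


def infer_sync(samples):
    """Synchronous style: the caller blocks until the result is ready."""
    return run_inference(samples)


def infer_async(executor, samples, on_done):
    """Asynchronous style: return at once, call on_done(result) on completion."""
    future = executor.submit(run_inference, samples)
    future.add_done_callback(lambda f: on_done(f.result()))
    return future


results = []
with ThreadPoolExecutor(max_workers=1) as pool:
    pending = infer_async(pool, [0.1, 0.7, 0.2], results.append)
    # The caller is free to do other work here while inference runs.
    blocking_result = infer_sync([0.9, 0.05, 0.05])
    pending.result()  # wait here only so the demo finishes deterministically

print("sync result:", blocking_result, "async results:", results)
```

On the device, the same trade-off applies: the synchronous call is simpler to reason about, while the callback style lets the application continue with other work until the result arrives.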

Initial development for the nRF54LM20B is supported by the Edge AI Add-on v2.0.0, which builds on top of nRF Connect SDK v3.3.0-preview2. There is no need to install the preview release separately, because it is installed automatically with the Add-on. Note that, for now, the nRF54LM20A is the only available target for samples that do not require the Axon NPU. With the next official release of nRF Connect SDK (v3.3.0), all samples that support the nRF54LM20A will also explicitly support the nRF54LM20B as a target.

Closing

As we’ve seen, the Axon NPU brings new possibilities for edge AI to the nRF54L Series. The new development tools and unique text-to-wake-word feature in Edge AI Lab allow for easy implementation of advanced AI in ultra-low-power end devices.

Developers who want to evaluate the nRF54LM20B SoC can visit the nRF54LM20 DK product page for a list of distributors with the kit in stock. The DK is also used to develop applications for the nRF54LM20A SoC.
