I am looking at ncs\v3.1.0\nrf\samples\bluetooth\throughput\sysbuild\ipc_radio\prj.conf for the nRF5340 and extrapolating from it to a central application with multiple peripheral connections. In that scenario, RAM use on the network core becomes a concern.

1. Why does the sample set such a large heap size (CONFIG_HEAP_MEM_POOL_SIZE=8192)? I am not aware of any k_malloc() use in either the Throughput sample or ipc_radio.

* Yes, we can use a "minimal.conf", but why put such a large default of 8192 in the Throughput prj.conf?

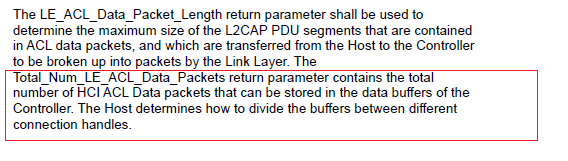

2. Why doesn't the sample suggest adjusting other configurations, notably the CONFIG_BT_CTLR_SDC_TX_PACKET_COUNT, CONFIG_BT_CTLR_SDC_RX_PACKET_COUNT, and CONFIG_BT_BUF_ACL_TX_COUNT? Couldn't these influence throughput?

* See my case 296354, where increasing CONFIG_BT_CTLR_SDC_TX_PACKET_COUNT helped keep the More Data (MD) bit set.

3. That same case, 296354, included this statement:

"There is no need to maintain any ratio or relationship between CONFIG_BT_CTLR_SDC_TX_PACKET_COUNT and CONFIG_BT_BUF_ACL_TX_COUNT. Also, the latter is only used by the Zephyr LL. (Generally, the difference between BT_BUF_ACL_TX_COUNT and BT_CTLR_SDC_TX_PACKET_COUNT is that the latter is per connection while the former is shared. This does not matter for a single connection, though.)"

That statement was made for an older NCS release, 2.0. I believe CONFIG_BT_BUF_ACL_TX_COUNT is in fact used by the SDC in newer NCS versions, such as NCS 2.6 and later. Is that correct?
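
To make questions 2 and 3 concrete, this is the kind of fragment I have in mind for the ipc_radio image. It is only a sketch: the values are placeholders I picked for illustration, not taken from the sample or from any recommendation, and whether the last line has any effect on the network core with the SDC is exactly what question 3 asks.

```
# Hypothetical additions to the ipc_radio prj.conf (network core).
# All values are illustrative placeholders, not tuned or recommended settings.

# SoftDevice Controller packet buffers. Per the statement quoted from
# case 296354, the TX count is allocated per connection, so RAM cost
# would scale with the number of peripheral links.
CONFIG_BT_CTLR_SDC_TX_PACKET_COUNT=4
CONFIG_BT_CTLR_SDC_RX_PACKET_COUNT=4

# ACL TX buffer count, described in the same statement as shared across
# connections. Assumption: it is unclear to me whether the SDC uses this
# in NCS 2.6 and later (see question 3).
CONFIG_BT_BUF_ACL_TX_COUNT=4
```

With several connections on a central, each increment of the per-connection counts is multiplied by the connection count, which is why I would like guidance on which of these actually matter for throughput.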