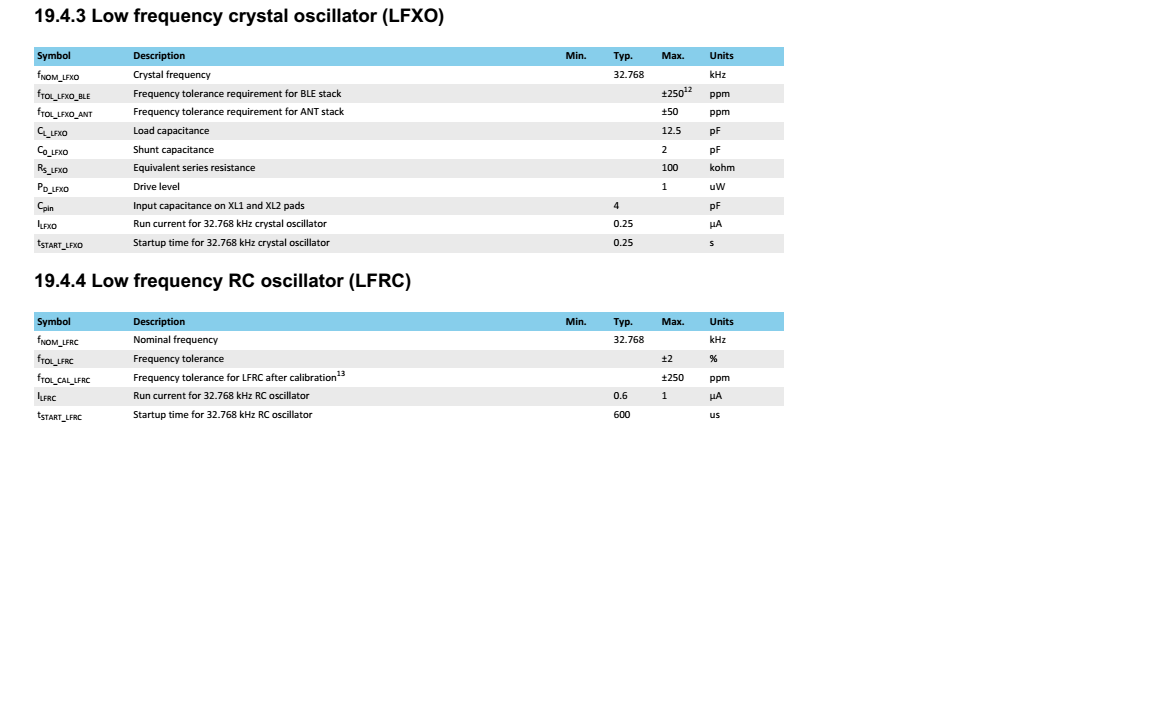

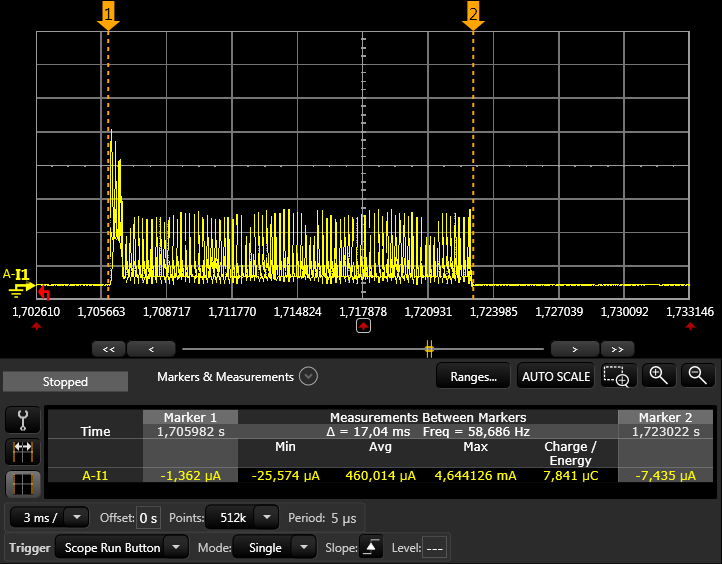

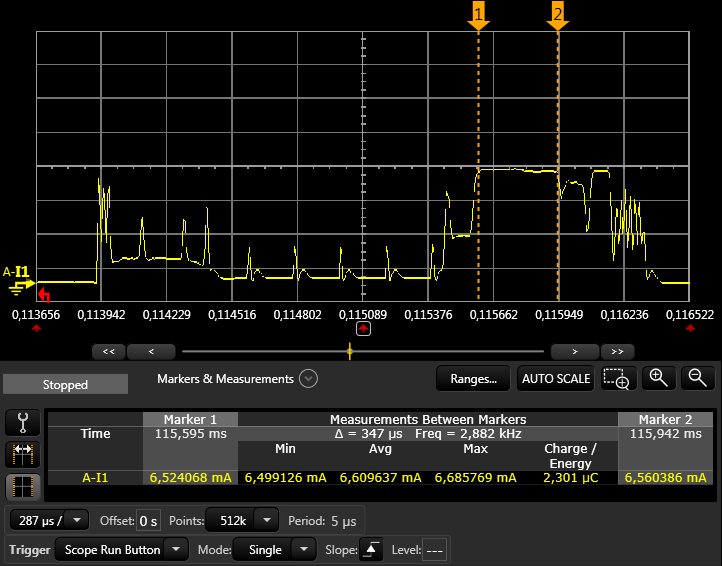

I am using the nRF52832 in the WLCSP package. The low-frequency clock is configured to use the internal RC oscillator, as follows:

    {.source = NRF_CLOCK_LF_SRC_RC, \
     .rc_ctiv = 16, \
     .rc_temp_ctiv = 2, \
     .xtal_accuracy = NRF_CLOCK_LF_XTAL_ACCURACY_250_PPM}
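For reference, here is a minimal sketch of what these values mean in time. It assumes the units given in the SoftDevice documentation for `nrf_clock_lf_cfg_t`: `rc_ctiv` counts in 250 ms steps, and `rc_temp_ctiv` is the number of calibration intervals after which a calibration is forced even if the temperature has not changed. The helper names and the `RC_CTIV_UNIT_MS` macro are hypothetical and are not part of the SDK.

```c
#include <stdint.h>

/* Assumption: rc_ctiv is expressed in units of 250 ms (1/4 second). */
#define RC_CTIV_UNIT_MS 250u

/* Hypothetical helper: time between calibration timer wake-ups, in ms. */
static uint32_t rc_calibration_interval_ms(uint8_t rc_ctiv)
{
    return (uint32_t)rc_ctiv * RC_CTIV_UNIT_MS;
}

/* Hypothetical helper: longest time between two actual calibrations when
   the temperature does not change, in ms.
   Assumption: rc_temp_ctiv == 0 means "calibrate on every interval". */
static uint32_t rc_max_calibration_period_ms(uint8_t rc_ctiv, uint8_t rc_temp_ctiv)
{
    uint32_t intervals = (rc_temp_ctiv == 0u) ? 1u : (uint32_t)rc_temp_ctiv;
    return rc_calibration_interval_ms(rc_ctiv) * intervals;
}
```

With `rc_ctiv = 16` and `rc_temp_ctiv = 2`, these helpers give a 4000 ms calibration timer interval and at most 8000 ms between calibrations, which is where I would expect the extra current compared with a crystal to come from.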

How much does the current consumption increase with this configuration, compared with using an external crystal?

Also, please explain in detail why the current consumption increases.

Thank you,

uba